The Future of Live Coding Evaluations

November 12, 2025

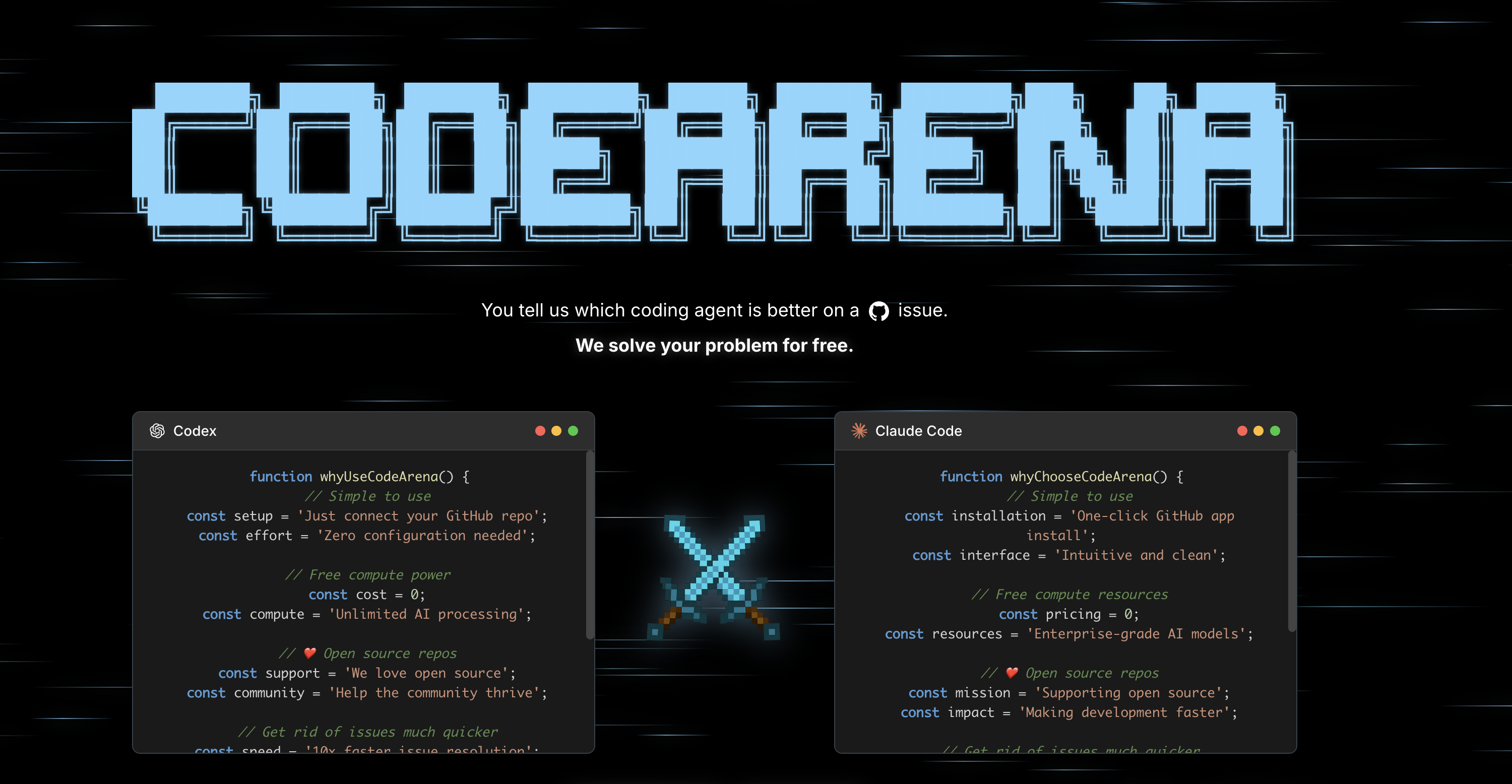

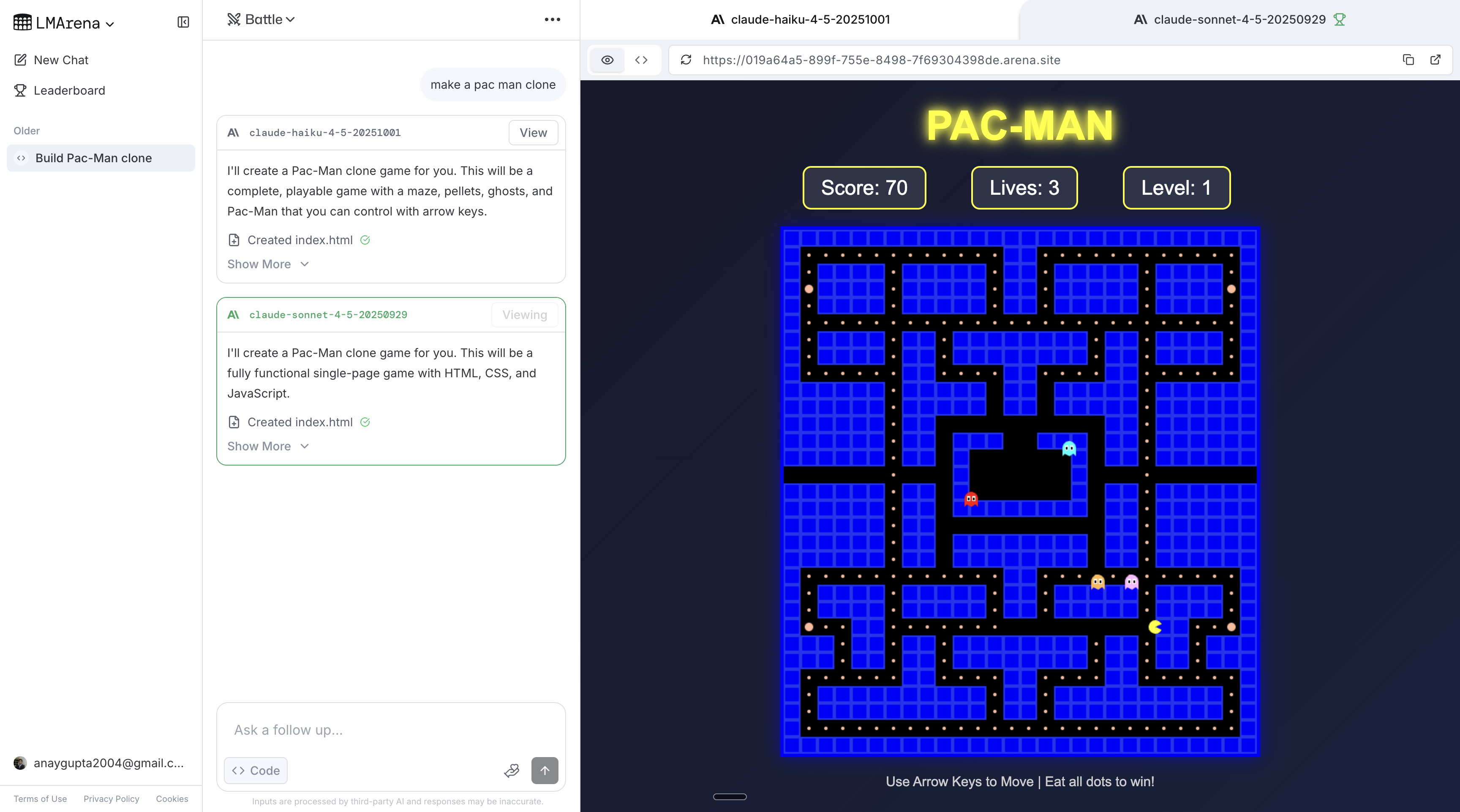

Recently, I spent some time working on Codearena, a platform to A/B test coding agents (e.g. Codex, Claude Code) for free by letting them compete to solve your GitHub issues. After installing our app, you could tag the GitHub bot (@codearena-bot) on any public GitHub repository to receive free PRs on your issues.

I've also recently had the amazing opportunity to chat in depth with Wei-Lin Chiang, co-founder of LMArena, about Codearena, what the best form factor would be for A/B testing models/agents in real codebases, and where LMArena is heading. I want to use this blog post to focus on the different form factors you could implement to make a better Codearena.

TLDR

There are four main form factors that are the most promising:

- VSCode Extension - highest friction to use, great UI/UX for displaying changes across different model/agent outputs

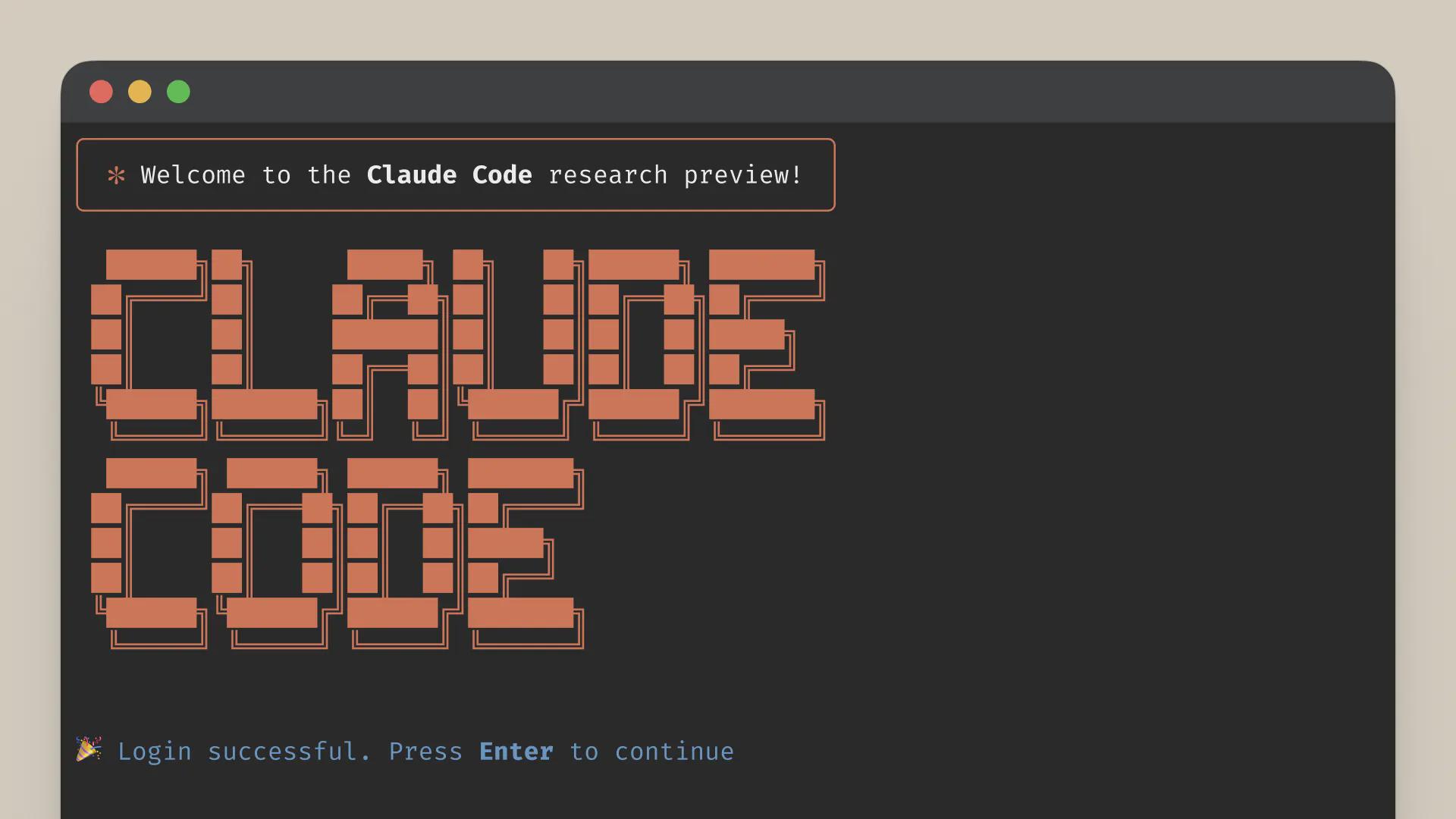

- CLI - low friction to use, intuitive for developers, but hard to build a good UI/UX for applying/displaying changes across different model/agent outputs

- Web - lowest friction to use, harder to convince hardcore full-stack/backend developers to use (better suited to web dev, more design-heavy engineers, and vibecoders)

- GitHub bot - very similar to the existing Codearena

The best option, in my opinion, is to develop a CLI. It meets developers where they want to code (on their own machines) and has a low barrier to entry (just a package install vs installing a VSCode Extension/IDE). People switch between Codex and Claude Code all the time, whereas Cursor is much stickier. The runner-up would be the web interface; I think it represents the future as developers become more hands-off and asynchronous coding agents, such as background agents, become the norm.

A big caveat is that each of these form factors captures a different flavor of evaluation: the GitHub bot captures how good frontier models are at solving production bugs, the existing Code Arena in LMArena largely captures a model's design taste for web development tasks, a CLI/extension captures how good models are at iterative development tasks...

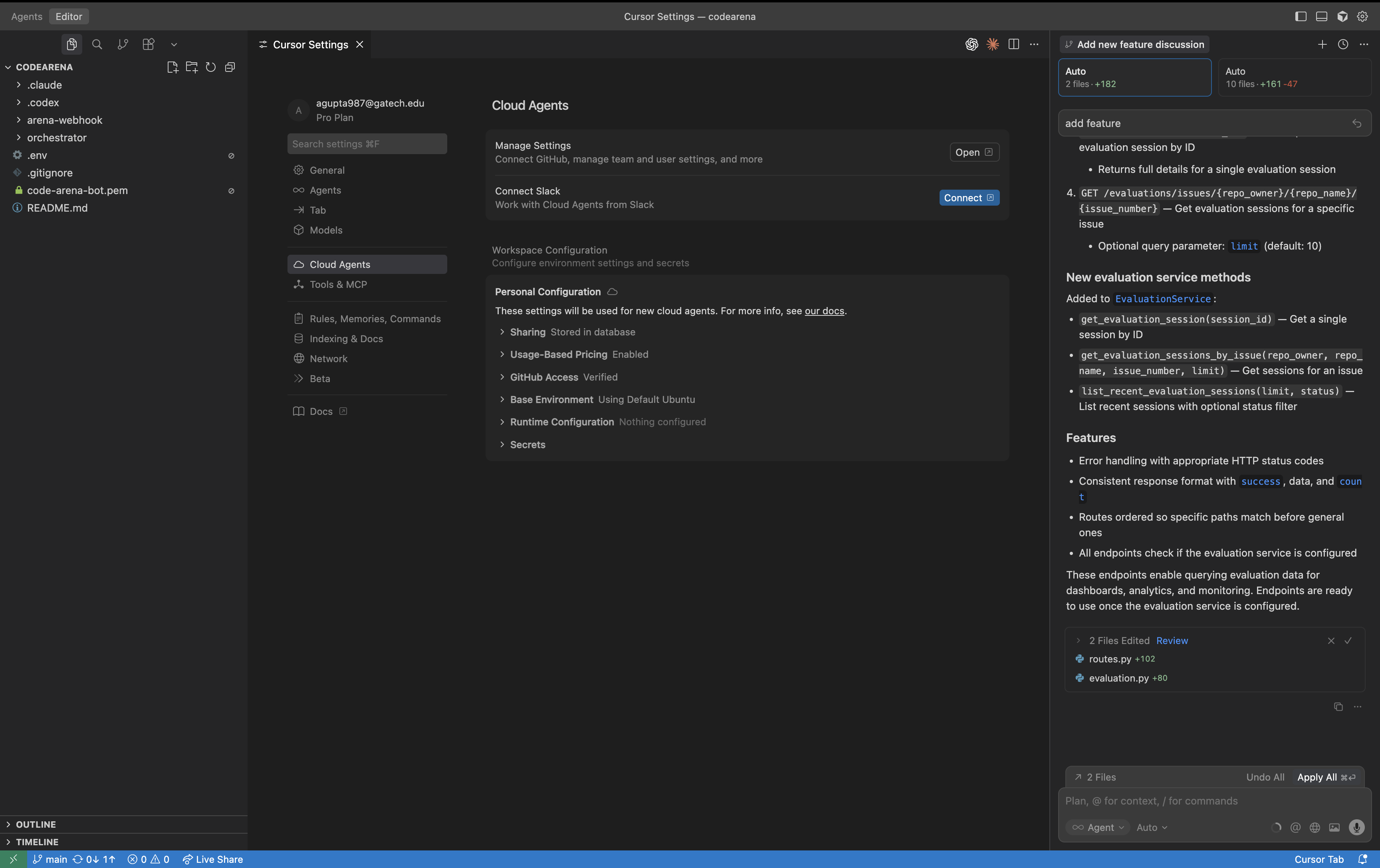

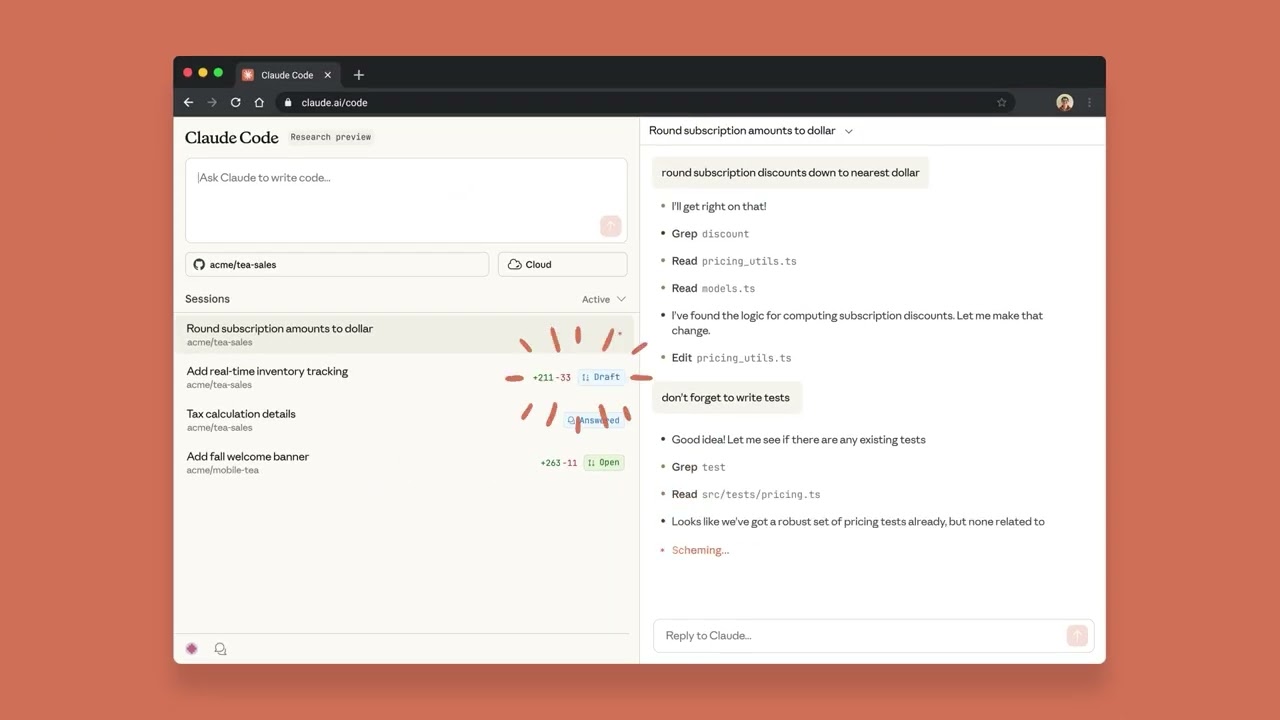

Form Factor 1: VSCode Extension

This form factor is exactly like Cursor 2.0. That version lets you specify how many models/agents you want to run at once, and then spawns all of them to complete a given task. This is what you see on the right-hand side: if you click on an agent, it shows that agent's output applied to your codebase. It is the most intuitive UI/UX, and it abstracts the complexity of Git worktrees away from the user.
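To make the worktree point concrete, here is a minimal sketch of the kind of plumbing such an extension would hide. It is not how Cursor or any real extension does it; the `arena/<agent>` branch names, the `.arena` directory, and both function names are assumptions made up for illustration. The only real command used is `git worktree add -b <branch> <path> <commit-ish>`.

```python
import subprocess


def worktree_commands(agents, base="HEAD", root=".arena"):
    """Build one `git worktree add` command per agent.

    Each agent gets its own branch and its own checkout directory, so
    parallel agents never overwrite each other or the user's working tree.
    The branch and directory naming here is hypothetical.
    """
    commands = []
    for agent in agents:
        branch = f"arena/{agent}"   # hypothetical branch naming scheme
        path = f"{root}/{agent}"    # hypothetical checkout location
        commands.append(["git", "worktree", "add", "-b", branch, path, base])
    return commands


def create_worktrees(repo, agents, base="HEAD"):
    """Run the commands inside `repo`; raises CalledProcessError on failure."""
    for cmd in worktree_commands(agents, base):
        subprocess.run(cmd, cwd=repo, check=True)
```

Calling `create_worktrees("/path/to/repo", ["codex", "claude-code"])` would leave two isolated checkouts under `.arena/`, one per agent. Clicking between agents in the UI then amounts to pointing the editor at a different checkout.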

The workflow is simple:

- Click between the agents.

- Test each agent's changes yourself to see if they work.

- Apply the best code changes.

- Repeat. ↻

Super simple!

The downsides of this approach are also fairly obvious. The activation energy for a dev to install an extension in VSCode/Cursor is high (at least compared to the other form factors), especially if they are already subscribed to Cursor, but it is not impossible to convince a dev to use this for free.

Form Factor 2: CLI

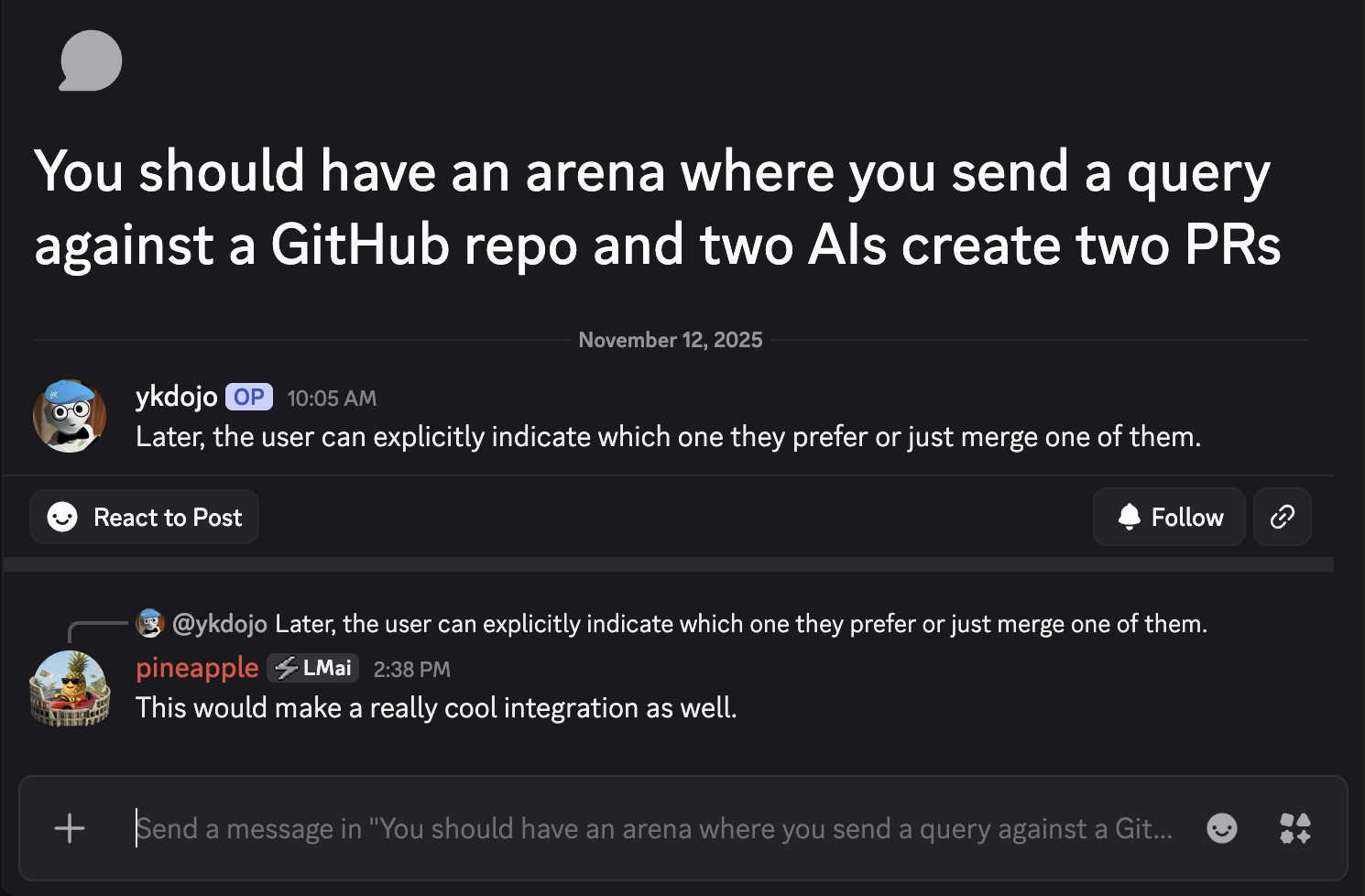

Interestingly enough, there seems to be demand for this already in the LMArena Discord: a CLI where you have 2 outputs instead of 1 for each feature request that you have.

Each prompt would produce 2 different implementations, similar to the VSCode Extension. You could use the left (←) and right (→) arrow keys to apply each model's changes to your codebase, test the changes on your own machine, and then press enter (↵) on the selected side to use that output for the next prompt.

The workflow would be:

- User submits prompt to CLI.

- User switches between model outputs using arrow keys (← / →).

- Test the changes on their own machine.

- Press enter (↵) to apply the selected model's output.

- Repeat. ↻
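The selection loop above can be sketched as a tiny state machine. This is only an illustration under stated assumptions: the `Comparison` class, its field names, and the `"left"` / `"right"` / `"enter"` key strings are hypothetical, and reading real keypresses and applying diffs to the working tree are left out.

```python
from dataclasses import dataclass, field
from typing import Optional, Tuple


@dataclass
class Comparison:
    """Tracks which of two model outputs is previewed and which one wins."""

    left: str                          # label of the model on the left
    right: str                         # label of the model on the right
    previewed: str = field(init=False)  # output currently applied for testing
    winner: Optional[str] = None       # set once the user presses enter

    def __post_init__(self) -> None:
        # Assumption: the left output is previewed first.
        self.previewed = self.left

    def handle_key(self, key: str) -> None:
        """Apply one keypress; keys are ignored once a winner is chosen."""
        if self.winner is not None:
            return
        if key == "left":
            self.previewed = self.left
        elif key == "right":
            self.previewed = self.right
        elif key == "enter":
            self.winner = self.previewed

    def vote(self) -> Tuple[str, str]:
        """Return (winner, loser), the A/B result for this prompt."""
        if self.winner is None:
            raise ValueError("no output has been selected yet")
        loser = self.right if self.winner == self.left else self.left
        return self.winner, loser
```

A session would create one `Comparison` per prompt, feed it keys as the user flips between outputs, and store `vote()` once enter is pressed. The winning output then becomes the starting point for the next prompt.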

The CLI approach offers several advantages. It meets developers where they already work - in the terminal - and has a much lower barrier to entry than a full IDE extension. Developers can simply install a package and start comparing model outputs immediately. The challenge lies in creating an intuitive interface for viewing and applying changes across different model outputs without the rich visual interface that an IDE provides.

Form Factor 3: Web

LMArena recently launched their own Code Arena on the web. It works similarly to how you would use Lovable or Replit.

The workflow is straightforward:

- User submits prompt in the web interface.

- Models generate competing implementations.

- Select the preferred implementation.

- Repeat. ↻

However, this flavor of the web form factor is geared mostly towards web development. One could add a "Connect to GitHub" button and expand the functionality into something like Anthropic's Claude Code on the Web to appeal to more developers.

But both approaches in their current state (to the best of my knowledge) are not appealing to hardcore developers. For the time being, these mediums are better suited to vibecoders, prototyping, and experimentation, though they still make for an extremely useful benchmark that tells you a lot about where models stand.

Form Factor 4: GitHub Bot

This is exactly the Codearena implementation. This flavor of evaluation captures how good frontier models are at solving production bugs in real-world codebases.

The workflow also does not have too much friction:

- Install the GitHub App.

- Tag @codearena-bot on a GitHub issue.

- Review the competing solutions and select the best PR.

- Repeat. ↻

Why didn't Codearena blow up?

In theory, it seems like a great idea! OSS maintainers have hundreds of issues that need to be solved, and we can provide solutions at scale for free. A win-win situation.

Someone recently suggested the idea of Codearena in the LMArena Discord feedback channel.

Except, there are problems.

There are two categories of OSS maintainers with many issues:

- Hobbyists maintaining passion projects - These maintainers want high-quality PRs that understand their project's architecture, coding standards, and conventions. They don't want to wade through a bunch of broken, bloated code. Current models struggle to consistently deliver this quality, especially on first try without iteration.

- Corporate-backed OSS repositories - These teams care primarily about output. They have the volume of work that could benefit from automated solutions, but still need PRs that meet their quality bar.

Additionally, the GitHub bot form factor introduces friction in the feedback loop. If a model's solution isn't quite right, there's no easy way to iterate within the GitHub/Codearena interface. Developers either accept the PR as-is, manually fix it, or reject it entirely.

We also should have stuck with our product longer and done better marketing to test whether these concerns actually hold.

Final Thoughts

The best option (in my opinion) is a CLI that developers install locally, since it strikes the right balance between low friction and powerful functionality.

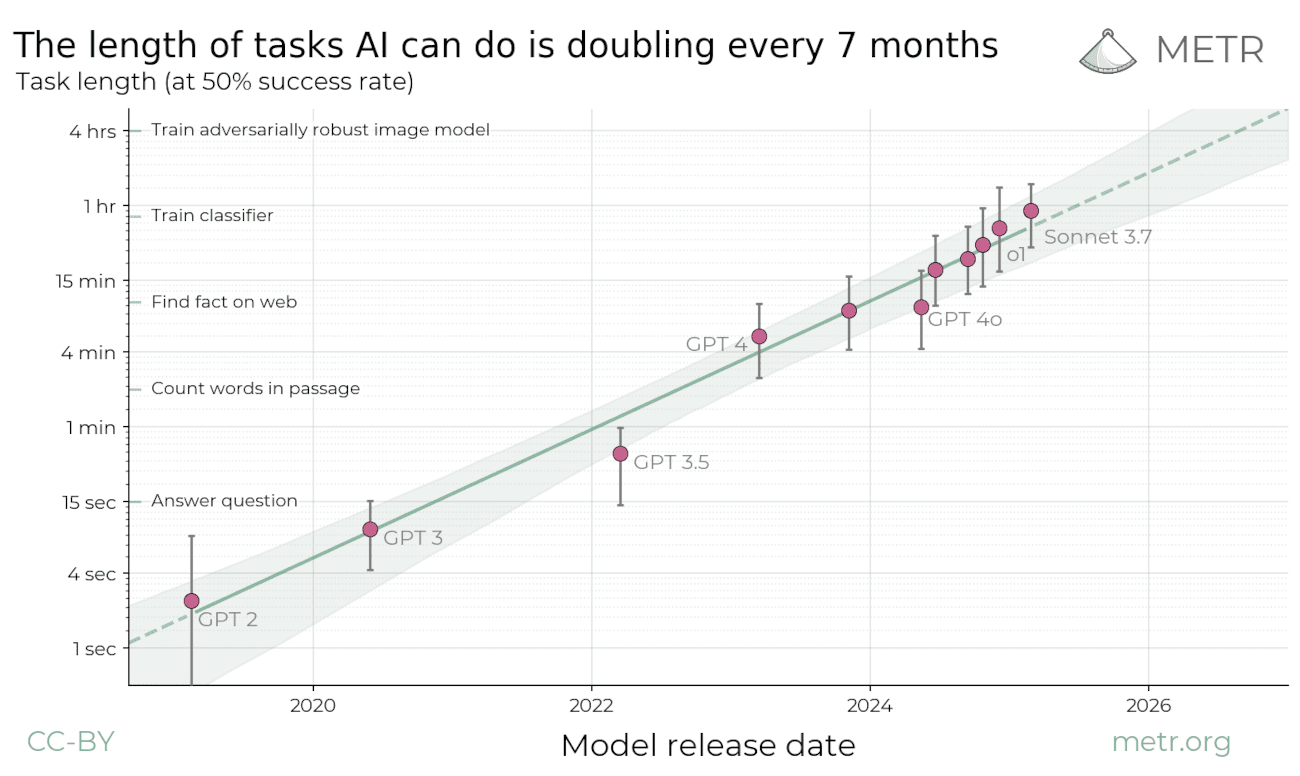

Source: METR autonomous AI capabilities benchmarks

As model capabilities rise, they'll handle longer tasks more reliably. We've already seen this with Cursor: individual queries → agent mode → background agents. Once models can reliably complete multi-day coding tasks in production codebases, even hardcore developers might prefer the web interface.

Right now, developers care most about reliability at scale. Code quality isn't there yet, which is why the feedback loop matters. But this will change. The key takeaway: think one to two steps ahead when building coding evaluations. Different form factors capture different capabilities, and the ideal platform probably incorporates multiple approaches.

This post reflects my thoughts on building better evaluation frameworks for coding agents. If you're working on similar problems or have thoughts on this, I'd love to chat.